Brokerage AI Tools Are Here. Without Standards, Adoption Turns Into Chaos.

education

AI tools are rolling out across real estate brokerages fast. The intent is good: less time writing, faster marketing, quicker follow-up, better leverage.

But what happens next is predictable.

Some agents adopt immediately. Some refuse. Others use it casually, with their own prompts, their own tone, and their own assumptions. Leadership can’t tell what’s working, marketing can’t keep things consistent, and compliance starts catching mistakes after content is already public.

The tool isn’t the core issue. The operating system around the tool is.

You’ll recognize this pattern:

One group uses AI weekly. Another group avoids it completely.

Prompts vary wildly, so outputs vary wildly.

Brand voice becomes inconsistent across listings, social posts, and emails.

Compliance language is missing, changed, or added inconsistently.

AI-generated content gets posted or sent without review.

A common pattern we see is 30–60% weekly adoption during the first month after a rollout. That might sound fine until you realize the business is now producing content through two different systems: “AI-assisted” and “manual,” with no shared rules for either.

The fastest way to get burned with AI is letting it produce “confident-but-wrong” details.

AI will fill in gaps. It will infer. It will guess. And it will sound certain while doing it.

Common failure points:

Property facts: HOA details, fees, assessments, square footage, lot size, permits, upgrades, tax info.

Location claims: school boundaries, “walkable to,” commute time, neighborhood labels.

Compliance drift: missing required disclosures, inconsistent brokerage identifiers, off-brand claims. For California-specific advertising requirements, the California Department of Real Estate’s advertising guidelines are a solid baseline.

Fair housing risk: language like “perfect for families,” “exclusive,” “safe neighborhood,” or coded phrasing that can create issues. If you’re tightening language standards, it helps to reference fair housing guidance from HUD and California’s Civil Rights Department.

Because AI can generate plausible but incorrect details, a verification step isn’t optional—it’s basic risk control. NIST’s AI Risk Management Framework is a good reference point on why guardrails and review matter.

Even when the output is “mostly fine,” it often creates a hidden cost: revisions. If AI saves 10 minutes but generates 20 minutes of cleanup, you didn’t save time—you just moved the work.
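The arithmetic is easy to write down. This is a minimal sketch using the 10 and 20 minute figures from the sentence above; the function name is ours, chosen only for illustration.

```python
def net_minutes_saved(drafting_saved: float, cleanup_added: float) -> float:
    """Minutes actually saved once cleanup time is subtracted."""
    return drafting_saved - cleanup_added


# Figures from the example above: 10 minutes saved, 20 minutes of cleanup.
print(net_minutes_saved(10, 20))  # -10
```

A negative result means the draft cost more time than it saved.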

Most agents don’t need more “AI training.” They need guardrails.

The agents who get the most value tend to do one boring thing well:

They standardize inputs and standardize review.

That’s it.

They treat AI like an assistant, not an expert. And they build a lightweight system so the output is usable, consistent, and safe.

If you want AI leverage without chaos, set up a simple workflow that anyone can follow.

When every agent prompts differently, you’ll get different tone, different claims, and different risk.

Create a small set of approved prompt templates for the most common use cases:

Listing description

Instagram caption

Client follow-up email (buyer or seller)

These prompts should include:

Desired tone (plain, confident, no hype)

Style rules (no exaggeration, no “#1,” no guarantees)

Prohibited language (including fair housing-sensitive phrases)

Required elements (disclosures, identifiers, etc., if applicable)

The goal isn’t perfection. The goal is consistency.
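One way to keep an approved prompt consistent is to store it as a fill-in template rather than retyping it. The sketch below is illustrative only: the template wording, the constant names, and the `build_prompt` helper are assumptions, and the prohibited phrases are just the examples mentioned earlier, not a complete or authoritative list.

```python
from string import Template

# Hypothetical house rules; replace with your brokerage's approved wording.
TONE = "plain, confident, no hype"
STYLE_RULES = ["no exaggeration", 'no "#1" claims', "no guarantees"]
PROHIBITED = ["perfect for families", "exclusive", "safe neighborhood"]

PROMPT_TEMPLATE = Template(
    "Write a $use_case.\n"
    "Tone: $tone.\n"
    "Style rules: $style_rules.\n"
    "Never use these phrases: $prohibited.\n"
    "Required elements: $required.\n"
    "Use only the facts in the fact box. Do not guess missing details."
)


def build_prompt(use_case: str, required: list[str]) -> str:
    """Fill the shared template so every agent starts from the same rules."""
    return PROMPT_TEMPLATE.substitute(
        use_case=use_case,
        tone=TONE,
        style_rules="; ".join(STYLE_RULES),
        prohibited="; ".join(PROHIBITED),
        required="; ".join(required) if required else "none",
    )


# Placeholder required elements; use whatever your compliance team specifies.
prompt = build_prompt("listing description", ["required disclosures", "brokerage identifier"])
print(prompt)
```

Because the rules live in one place, changing a tone rule or adding a prohibited phrase updates every use case at once.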

Before anyone runs the prompt, require a short “fact box” pasted above it. This is where the agent supplies verified information.

A simple fact box might include:

Address (or guidance on whether the address may be shared publicly or must stay private)

Verified square footage source (MLS / assessor / appraisal / builder)

HOA status: verified / not verified (and if verified, source)

Key features to highlight (3–6 bullets)

Known restrictions: disclosures, permit uncertainty, “do not mention”

Required disclaimers/branding elements (if applicable)

This one step prevents most of the confident inaccuracies that create cleanup and risk.
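A fact box can be as simple as a shared text snippet, but a small structured version makes gaps obvious before the prompt runs. The sketch below is one possible shape, assuming Python 3.9 or later; the `FactBox` class, its field names, and the sample property are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class FactBox:
    address: str
    sqft: int
    sqft_source: str  # e.g. "MLS", "assessor", "appraisal", "builder"
    hoa_verified: bool
    hoa_source: str = ""
    key_features: list[str] = field(default_factory=list)
    do_not_mention: list[str] = field(default_factory=list)

    def problems(self) -> list[str]:
        """Return the gaps that should be fixed before prompting."""
        issues = []
        if not self.sqft_source:
            issues.append("square footage has no source")
        if self.hoa_verified and not self.hoa_source:
            issues.append("HOA marked verified but no source given")
        if not 3 <= len(self.key_features) <= 6:
            issues.append("list 3 to 6 key features")
        return issues

    def render(self) -> str:
        """Produce the text block that gets pasted above the prompt."""
        if self.hoa_verified:
            hoa = f"verified ({self.hoa_source})"
        else:
            hoa = "not verified, do not mention"
        return "\n".join([
            "FACT BOX (use only these facts)",
            f"Address: {self.address}",
            f"Square footage: {self.sqft} (source: {self.sqft_source})",
            f"HOA status: {hoa}",
            "Key features: " + "; ".join(self.key_features),
            "Do not mention: " + ("; ".join(self.do_not_mention) or "none"),
        ])


# Made-up sample property, for illustration only.
box = FactBox(
    address="123 Example St",
    sqft=1850,
    sqft_source="MLS",
    hoa_verified=False,
    key_features=["remodeled kitchen", "two-car garage", "covered patio"],
    do_not_mention=["garage conversion permit status"],
)
print(box.problems())
print(box.render())
```

Marking an unverified item as "do not mention" in the rendered text gives the model an explicit instruction instead of a gap to fill.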

AI output should not go straight to the public.

A fast, repeatable checklist is the difference between “AI leverage” and “AI liability.”

Here’s a simple checklist you can use for almost anything:

Facts verified: anything measurable or specific is confirmed (yes/no)

Fair housing-safe language: no protected-class references or coded language (yes/no)

Required disclosures/branding: present and correct (yes/no)

Tone check: not hypey, not absolute, not misleading (yes/no)

If any item is “no,” revise before publishing or sending.

This isn’t bureaucracy. It’s quality control.
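The checklist above can also be expressed as a simple gate: every item must be answered "yes" before anything goes out. The sketch below is an assumption-laden illustration, not a compliance tool. The item keys and function names are ours, and the phrase scan is a crude substring match on the example phrases mentioned earlier. It can prompt a human reviewer but cannot replace one.

```python
CHECKLIST = (
    "facts_verified",
    "fair_housing_safe",
    "disclosures_present",
    "tone_ok",
)

# Example phrases only; a real list would come from your compliance team.
FLAGGED_PHRASES = ("perfect for families", "exclusive", "safe neighborhood")


def flag_phrases(text: str) -> list[str]:
    """Return any flagged phrase found in the draft (case-insensitive)."""
    lowered = text.lower()
    return [phrase for phrase in FLAGGED_PHRASES if phrase in lowered]


def ready_to_publish(answers: dict[str, bool], draft: str) -> tuple[bool, list[str]]:
    """Pass only if every checklist item is True and no flagged phrase appears."""
    blockers = [item for item in CHECKLIST if not answers.get(item, False)]
    blockers += [f"flagged phrase: {p}" for p in flag_phrases(draft)]
    return (not blockers, blockers)


all_yes = {item: True for item in CHECKLIST}
ok, blockers = ready_to_publish(all_yes, "Bright three-bedroom home with a remodeled kitchen.")
print(ok, blockers)
```

An unanswered item counts as "no", so skipping the review blocks publication rather than silently passing.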

If you’re an agent, a team lead, or someone supporting agents operationally, here’s a simple setup you can implement in under 30 minutes:

Pick three use cases you want consistent this month:

Listing descriptions

Social captions

Buyer follow-up emails

Write one approved prompt template per use case (keep them short).

Include tone rules

Include “don’t guess facts”

Include prohibited language examples

Create one fact box template that’s copied into every prompt.

Add “verified/not verified” toggles for anything that’s commonly wrong (HOA, permits, etc.)

Add the 60-second review checklist at the bottom of each template.

That’s the guardrails kit. It’s simple, repeatable, and it scales.

If you don’t track anything, the rollout becomes a vibe. You’ll get opinions, not insight.

You don’t need a complicated dashboard. Track a few basic indicators for two weeks and you’ll see what’s happening.

Good starter metrics:

Weekly usage rate: % of agents using the approved AI tool/templates weekly

AI-related corrections: number of marketing or compliance fixes tied to AI output

Revision cycles: average number of edits per listing description or post (often 1–3 is normal; higher means the prompt is poor or the input is incomplete)

Brand compliance score: a simple 1–5 rating based on required elements + tone + accuracy

If you only track two numbers, track these:

Weekly usage rate

AI-related corrections

Those two tell you whether adoption is stabilizing and whether quality is improving.
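Both numbers can be tracked in a spreadsheet, but here is a minimal sketch of the calculations for anyone who prefers a script. The function names and the two weeks of sample figures are made up for illustration and are not results from any real rollout.

```python
def weekly_usage_rate(active_agents: int, total_agents: int) -> float:
    """Percent of agents who used the approved tool or templates this week."""
    if total_agents <= 0:
        raise ValueError("total_agents must be positive")
    return 100.0 * active_agents / total_agents


def average_revisions(edit_counts: list[int]) -> float:
    """Average number of edits per listing description or post."""
    return sum(edit_counts) / len(edit_counts) if edit_counts else 0.0


# Hypothetical two-week log for a 40-agent office.
weeks = [
    {"week": 1, "active": 18, "total": 40, "corrections": 7},
    {"week": 2, "active": 22, "total": 40, "corrections": 4},
]

for w in weeks:
    rate = weekly_usage_rate(w["active"], w["total"])
    print(f"Week {w['week']}: usage {rate:.0f}%, AI-related corrections {w['corrections']}")
```

In this invented example, usage rises while corrections fall, which is the direction you would hope to see once templates and fact boxes are in place.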

Chaotic adoption becomes most visible during:

Spring listing season (higher content volume, tighter timelines)

Right after a new tool launch (experimentation spikes, standards lag)

High volume is when weak systems break. If your process depends on people “being careful,” it won’t hold when everyone is busy.

The goal is a system that still works when the market heats up.

AI is useful. It can reduce blank-page time, speed up drafts, and keep you moving.

But unmanaged AI creates two expensive outcomes:

Risk: fair housing issues, inaccuracies, brand misstatements

Drag: revision cycles, rework, and confusion about what “good” looks like

If you want speed without chaos, don’t focus on the tool.

Focus on the operating system:

Standard prompts

Verified fact boxes

60-second review

That’s how you get consistency, safety, and actual time savings—without turning your marketing into a game of cleanup.

Stay up to date on the latest real estate trends.

Buyer

Buyer

8 Seconds....

education

A simple operating system for speed, consistency, and compliance

Buyer

education

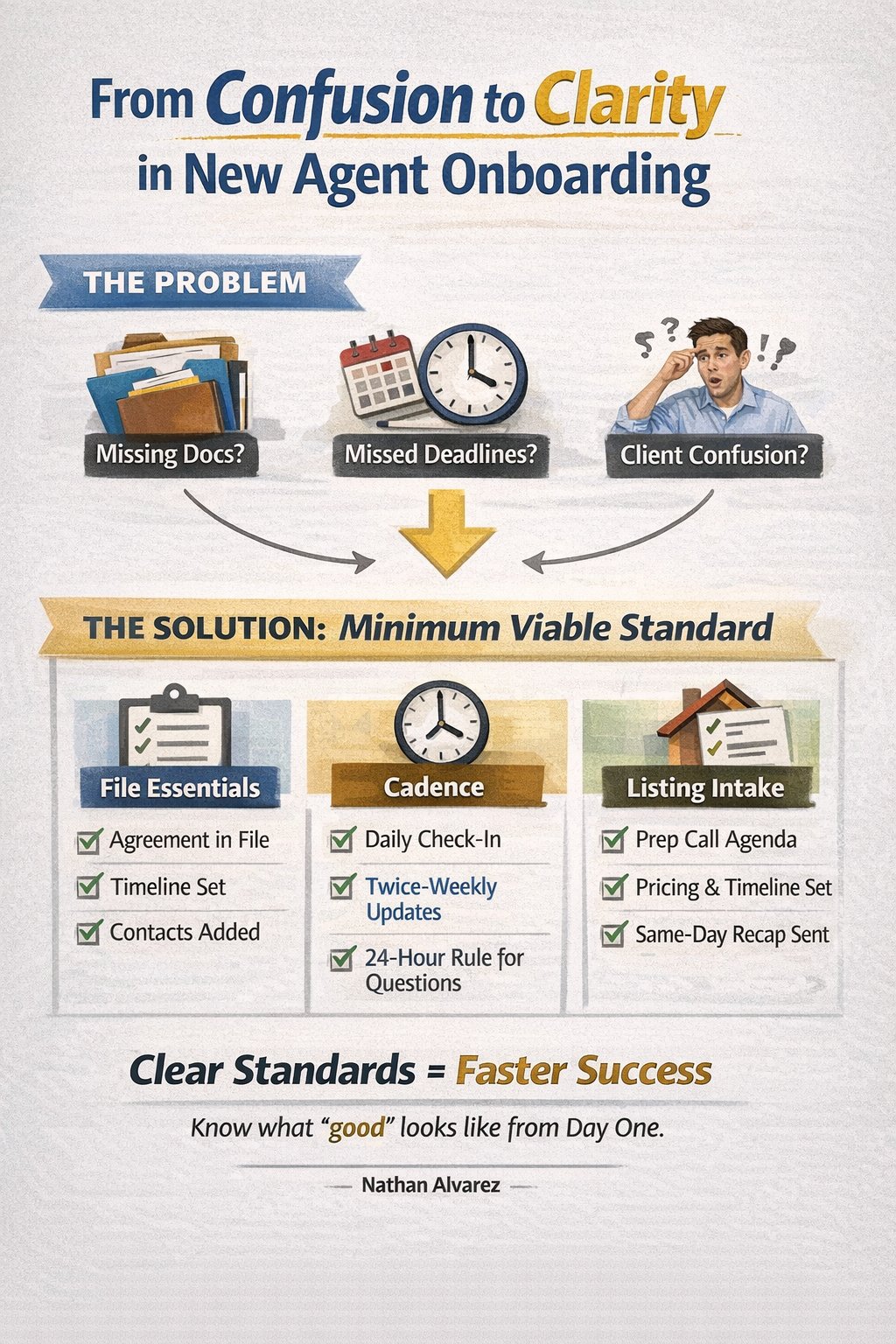

A 20-minute standard can prevent a lot of manager rescues and compliance kickbacks.

lifestyle

Homes have a funny way of evolving right alongside us... sometimes even ahead of us.

Guide

A Hidden Foothill Gem in Los Angeles County

education

Real talk on timing, demand, and one winter-smart financing option that can make buyers say “yes.”

education

Simple ways families can pass property without a long, costly court process.